Artificial intelligence is changing the world at a rapid pace, and Large Language Models (LLMs) sit at the center of this change. These AI systems read, write, and process human language. You may already use one without knowing it: publicly available tools such as ChatGPT, Google Gemini, and Claude all run on LLMs. That raises a simple question: how do they actually work?

- What Are Large Language Models?

- What Are the Key Characteristics of Large Language Models?

- How Do Large Language Models Learn?

- What Is the Role of Neural Networks in Large Language Models?

- What Is the Transformer Architecture in Large Language Models?

- What Are the Popular Large Language Models and Their Key Features?

- How Do Large Language Models Generate Text?

- What Are the Real World Applications of Large Language Models?

  - Customer Support & Chatbots

  - Healthcare & Medical Research

  - Education & Personalized Learning

  - Content Creation & Marketing

- What Are the Limitations and Challenges of Large Language Models?

- What Is the Future of Large Language Models?

- Conclusion

This article, Large Language Models Explained: How LLMs Work, featured on BFM Times, explains what these models are, how they learn, and how they generate text.

What Are Large Language Models?

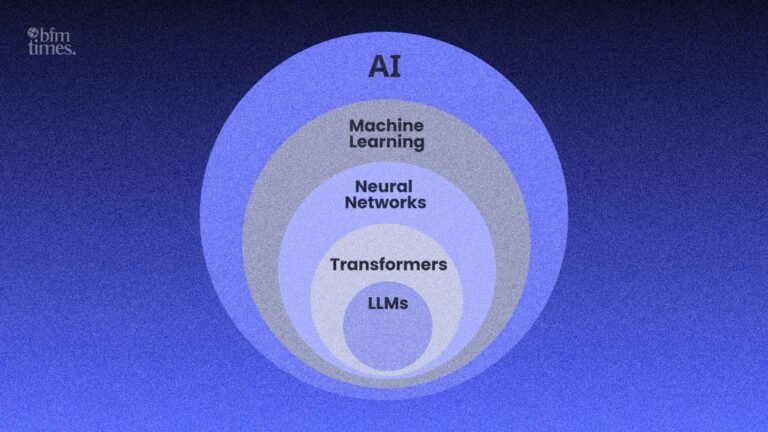

Large Language Models are a type of artificial intelligence. They learn from very large text datasets, and this learning lets them interpret and produce human-like language. The word “large” refers to the size of the model: these models contain billions of parameters. Parameters are numeric values, adjusted during training, that determine how the model turns an input into an output.

LLMs can perform many useful tasks. They can answer questions, write essays, translate languages, summarize content, and write computer code. They have become useful tools in daily life.

What Are the Key Characteristics of Large Language Models?

Large Language Models share several common traits. They train on large and diverse text data. They use deep learning methods to detect patterns in language. They handle many tasks with a single system.

These models have also improved over time. Training on more data with more powerful computers has generally made them more capable and more accurate.

How Do Large Language Models Learn?

Large Language Models learn through a training process. During training they process billions of words from books, websites, and other sources. This helps the model find patterns in the language it is trained on.

Training has two main stages. The first is pre-training, where the model learns general language patterns. The second is fine-tuning, where the model is adjusted for specific tasks when needed.

What Is the Role of Neural Networks in Large Language Models?

Large Language Models run on neural networks. These networks are loosely inspired by how the human brain processes information. They consist of layers of nodes that process data step by step.
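To make "layers of nodes" concrete, here is a minimal sketch of data passing through a tiny network. The layer sizes, the `tanh` activation, and every weight value are made up for illustration; a real LLM learns billions of such values during training instead of having them written by hand.

```python
import math

def dense_layer(inputs, weights, biases):
    """One layer: each node takes a weighted sum of the inputs plus a bias, then applies tanh."""
    return [
        math.tanh(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    """Pass the data through each layer in order, step by step."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Hypothetical hand-picked parameters: 3 inputs -> 2 hidden nodes -> 1 output node.
layers = [
    ([[0.5, -0.2, 0.1], [0.3, 0.8, -0.5]], [0.0, 0.1]),
    ([[1.0, -1.0]], [0.2]),
]

output = forward([1.0, 2.0, 3.0], layers)

# Every weight and every bias is one parameter of the model.
n_params = sum(len(w) * len(w[0]) + len(b) for w, b in layers)

print("output:", output)
print("parameters:", n_params)
```

This toy network has 11 parameters. The same counting applies to an LLM, only with far more layers and far wider ones.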

The transformer is the most important type of neural network for LLMs. It was introduced in 2017 and changed the field of artificial intelligence. Transformers now serve as the core architecture of most modern LLMs.

What Is the Transformer Architecture in Large Language Models?

The transformer uses a method called self-attention. Self-attention lets the model weigh how relevant every other word in a sentence is to the word it is currently processing. This allows the model to capture context better than older architectures could.

An example makes this clear. In the sentence “The bank by the river is flooded,” the nearby words “river” and “flooded” tell the model that “bank” means a river bank, not a financial institution.
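The idea can be sketched in a few lines of Python. This is a simplified version of scaled dot-product self-attention, not a full transformer layer: real models first map each word vector to separate query, key, and value vectors using learned weights, which this sketch skips. The two-dimensional word vectors are invented for the example, with the first number loosely standing for "water" and the second for "money".

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention without learned projections (a simplification)."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score every word against the current word, scaled by sqrt(dim).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # The new vector for this word is a weighted mix of all word vectors.
        mixed = [sum(w * vec[i] for w, vec in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Made-up embeddings: [water-ness, money-ness]. "bank" starts out ambiguous.
words = ["bank", "river", "flooded", "the"]
vectors = [[1.0, 1.0], [2.0, 0.0], [1.5, 0.0], [0.1, 0.1]]

outputs, weights = self_attention(vectors)
bank = words.index("bank")
for word, weight in zip(words, weights[bank]):
    print(f"bank attends to {word!r}: {weight:.2f}")
print("bank after attention:", outputs[bank])
```

With these invented vectors, “bank” puts more weight on “river” and “flooded” than on “the”, and its updated vector leans toward the water side. That is the disambiguation described above, in miniature.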

What Are the Popular Large Language Models and Their Key Features?

| LLM Name | Developer | Parameters (approx.) | Key Strength |
| --- | --- | --- | --- |
| GPT-4o | OpenAI | Undisclosed (1T is an unconfirmed estimate) | Multimodal reasoning |
| Gemini 1.5 Pro | Google DeepMind | Undisclosed | Long context window |
| Claude 3.5 Sonnet | Anthropic | Undisclosed | Safety & accuracy |
| LLaMA 3 / 3.1 | Meta AI | 8B to 405B | Open-weight flexibility |
| Mistral Large | Mistral AI | 123B (Mistral Large 2) | Efficient performance |

How Do Large Language Models Generate Text?

The process begins when a user asks a question. The model does not search for a stored answer. Instead, it predicts the next word using the patterns it learned during training.

A simple example makes the process clearer. Given the prompt “The sky is,” the model predicts that “blue” is a likely next word. It keeps predicting words, one at a time, until the answer is complete.

The entire process happens very quickly. That speed gives the impression that the model truly understands the question, but what it is actually doing is advanced pattern recognition to produce its output.
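The predict-one-word-at-a-time loop described above can be illustrated with a deliberately tiny stand-in for an LLM: a model that only counts which word follows which. This is a sketch under strong assumptions. The three-sentence corpus is invented, the model looks at just the previous word, and it always picks the most frequent follower, whereas a real LLM uses a neural network over the whole context and usually samples from a probability distribution.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count which word follows which in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

def generate(counts, prompt, max_new_words=5):
    """Extend the prompt one predicted word at a time."""
    words = prompt.lower().split()
    for _ in range(max_new_words):
        next_word = predict_next(counts, words[-1])
        if next_word is None:
            break
        words.append(next_word)
    return " ".join(words)

# Invented toy corpus; real models train on billions of words.
corpus = "the sky is blue . the sea is blue . the grass is green ."
model = train_bigram_model(corpus)

print(predict_next(model, "is"))
print(generate(model, "the sky is", max_new_words=1))
```

Because “blue” follows “is” twice in the toy corpus and “green” only once, the model continues “the sky is” with “blue”. Nothing is looked up as a stored answer; the continuation comes entirely from counted patterns.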

Tokenization: Breaking Language Into Pieces

Before processing starts, Large Language Models break text into smaller parts called tokens. A token can represent a word, part of a word, or a punctuation mark.

For example, the word “running” may be split into “run” and “ning.” Splitting words into reusable pieces lets the model handle a very large range of words, including rare ones, with a limited set of tokens.
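Here is a minimal sketch of subword splitting, assuming a greedy longest-match rule and a small hand-written vocabulary. Both are illustrative choices: production tokenizers typically learn their vocabulary from data with schemes such as byte-pair encoding, and the exact splits differ from model to model.

```python
def tokenize(word, vocabulary):
    """Split `word` into the longest vocabulary pieces, scanning left to right."""
    tokens = []
    start = 0
    while start < len(word):
        # Try the longest remaining piece first, then shrink it.
        for end in range(len(word), start, -1):
            piece = word[start:end]
            if piece in vocabulary:
                tokens.append(piece)
                start = end
                break
        else:
            # No known piece starts here: fall back to a single character.
            tokens.append(word[start])
            start += 1
    return tokens

# Hypothetical toy vocabulary; real tokenizers use tens of thousands of learned pieces.
vocabulary = {"run", "ning", "jump", "ing", "s", "."}

print(tokenize("running", vocabulary))
print(tokenize("jumping", vocabulary))
print(tokenize("runs.", vocabulary))
```

With this vocabulary, “running” splits into “run” and “ning”, matching the example above, and the same six pieces also cover “jumping” and “runs.” without needing a separate entry for each full word.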

What Are the Real World Applications of Large Language Models?

Large Language Models are now used across many industries, and their real-world applications continue to grow quickly.

Customer Support & Chatbots

Companies use LLMs to power customer support chatbots. These systems are available 24/7. They interpret natural human language and provide helpful replies almost instantly.

Healthcare & Medical Research

Doctors and researchers apply LLMs to medical analysis. The systems help examine medical records and find patterns in patient data quickly. They also assist with drug research and with writing medical documentation.

Education & Personalized Learning

Large Language Models are bringing major change to education. AI tutors now give students personal help with the topics they are studying. They explain ideas in simple terms and adjust to each learner’s pace.

Content Creation & Marketing

Writers, marketers, and businesses use LLM tools to produce content much faster. They create blog posts, social media captions, and email newsletters, which can save hours of work each day.

What Are the Limitations and Challenges of Large Language Models?

Large Language Models still have several limitations. Understanding these challenges is important because it helps people use the tools responsibly.

Hallucinations & Misinformation

LLMs sometimes produce incorrect information with strong confidence. This problem is called hallucination. It occurs because the model predicts language patterns instead of verifying facts.

Users should check important information on their own. People should not rely on AI alone for critical medical, legal, or financial decisions without an expert reviewing the output.

Bias in Training Data

Large Language Models learn from internet data, and this data can contain bias. As a result, the models may produce unfair or biased responses in certain situations. Researchers continue working to reduce these issues, focusing on the quality and diversity of the training data.

High Computational Costs

Training Large Language Models requires very powerful computers, and both training and running them consume large amounts of energy. This makes LLM development expensive and raises environmental concerns.

What Is the Future of Large Language Models?

The future of Large Language Models looks exciting. The models continue to improve quickly as technology advances, with significant new releases appearing every year.

Multimodal models are becoming more common. These systems process images, audio, video, and text together, which makes them more powerful and flexible.

Smaller, more efficient models are also gaining popularity. They require fewer computing resources but can still deliver strong and accurate results, which makes them easier to deploy in new systems.

Governments around the world are beginning to create rules for AI systems. These rules aim to keep the technology safe and its use responsible, and they will influence how LLM technology develops.

Conclusion

In conclusion, Large Language Models are one of the most powerful technologies available today. They are changing how people communicate, work, learn, and create. Systems built on the transformer architecture already affect many industries, and they offer valuable tools for students, professionals, and businesses. They also bring challenges that require careful use. Continued progress in Large Language Models will shape the technology of the coming years.

The age of Large Language Models has only just begun. It offers huge opportunities to people who understand how these models actually work.

What are Large Language Models (LLMs)?

Large Language Models are advanced AI systems trained on vast text data to understand, generate, and process human language.

How do Large Language Models work?

LLMs analyze patterns in massive datasets using deep learning to predict and generate meaningful text responses.

Where are Large Language Models commonly used?

LLMs are used in chatbots, content generation, translation, coding assistance, and virtual assistants.